The AI Stack Is the New World Order — And Standards Are Its Invisible Layer of Control

Why the global AI framework maps power more than it distributes it.

The Illusion of Alignment

In early 2026, over 90 countries signed onto a global AI framework in New Delhi. On paper, it looked like consensus — a rare moment of coordination in an otherwise fragmented technological landscape.

Look more carefully at what the framework commits its signatories to: broad language on safety and responsible development, a shared commitment to “human-centric AI,” and carefully worded paragraphs on access and inclusion. There are no binding enforcement mechanisms. No shared position on who owns the compute infrastructure that makes AI possible. No resolution to the question of data sovereignty versus global training pipelines. And conspicuous silence on the handful of private companies that make more consequential decisions about AI’s trajectory than most of the governments in that room.

This is not alignment. It is allocation.

The Stack: Where Power Actually Sits — And Why It Stays There

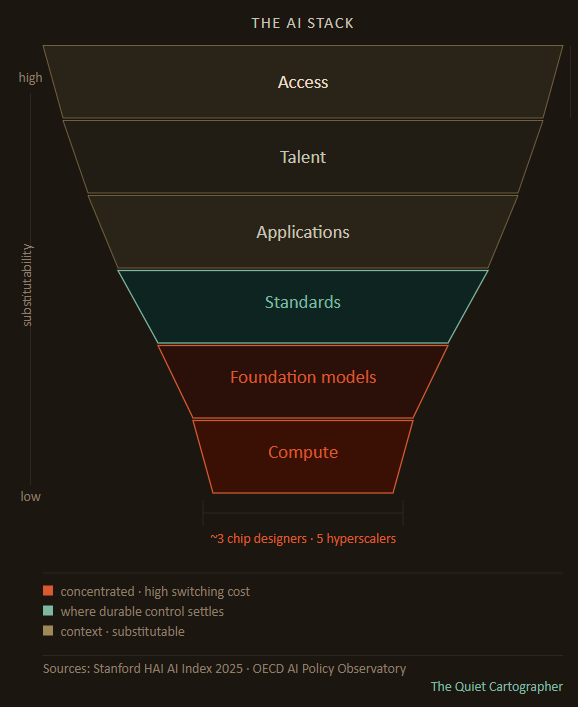

The infrastructure of artificial intelligence runs from the physical to the social — from the chips that process computation to the policies that govern who can access the outputs. Map this as a stack: Compute at the base, then Foundation Models, Standards, Data, Applications, Talent, and Access at the top.

At the base, compute is highly concentrated because the barriers to entry are not only financial. They are physical. Advanced chip fabrication requires decades of accumulated process knowledge, precision tooling that cannot simply be purchased and replicated, and supply chains with single points of failure. TSMC concentrates production of the world’s most advanced chips in Taiwan. NVIDIA’s architecture has become the default inference substrate not through policy but through developer lock-in accumulated over a decade of ecosystem building.

Foundation models occupy the next tier for a related reason: training runs at frontier scale require simultaneous access to compute, data, and talent in concentrations that almost no actor outside a handful of US labs and Chinese state-backed organisations can assemble. The marginal cost of deploying a trained model is low. The fixed cost of training a frontier one is high enough to function as a structural barrier. Most countries’ national AI strategies are, in practice, strategies for deploying models built by others.

Application and access layers are where most countries actually operate, because these are the layers where barriers to entry are lowest and substitution is easiest. A government can change the chatbot it deploys. It cannot easily change whose chips its data centres run on, or whose models its applications call. Substitution is cheap downstream and prohibitive upstream. That is how dependence is locked in. Countries that enter the stack at the application layer are not building leverage in the system. They are deepening their dependence on it.

The Corporate–State Gap

The framework is state-centric. The system is not. This is the structural tension everything else flows from.

Capital concentration, not individual decisions, is what drives the gap. In 2026, global hyperscaler capital spending is estimated at roughly $527 billion. The EU’s AI Act, the most ambitious public regulatory framework in existence, allocated €1 billion for enforcement. Even allowing for the currency difference, that is a gap on the order of 500 to one. It is not a rounding error. It is the relationship between the two systems. Private infrastructure is being built at a scale and speed that no regulatory body, and no framework agreement, is currently equipped to match.

The result is a dual structure that operates simultaneously: public frameworks articulate intent, private infrastructures determine reality. States negotiate principles. Companies ship systems. Governance frameworks are written against the previous generation of AI capabilities; frontier labs are already training the next one.

This gap is a structural feature of a technology whose development is funded primarily by private capital, whose most capable researchers cluster in a small number of organisations that can offer both resources and peer networks unavailable elsewhere, and whose deployment timelines are set by competitive dynamics rather than diplomatic calendars. Remove any current actor and the structural incentives remain.

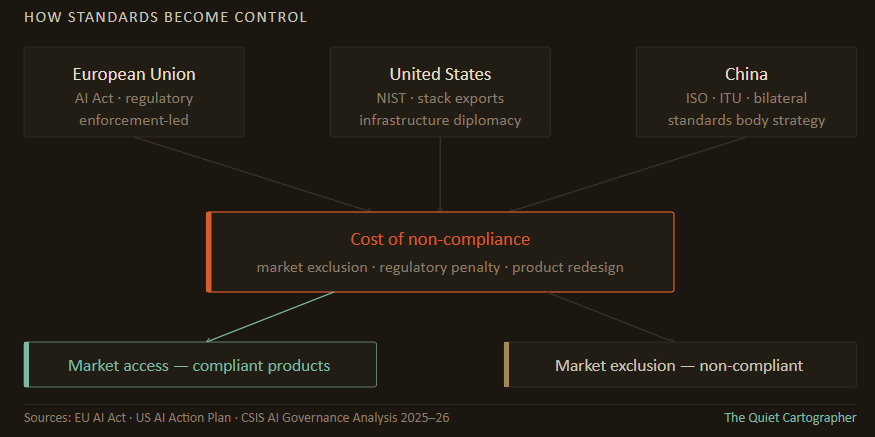

Standards become the contested terrain in this gap — the layer where public intent and private ambition are forced to negotiate, because it is the layer that determines whether private systems can access public markets.

Standards: The Invisible Layer of Control

If compute is the foundation of the AI stack, standards are its binding layer. They are also the layer that receives the least attention relative to the influence it carries.

Standards decide what scales and what doesn’t. But standards only matter when ignoring them costs more than complying with them. That constraint, the price of non-compliance, is what gives any standard-setting actor real leverage, and it is what distinguishes durable standards power from merely articulated preference.

The entity that defines standards does not need to dominate every layer of the stack. It only needs to make the rules by which layers interact costly to ignore.

This is why the EU’s AI Act, despite Europe’s minimal presence at the compute and model layers, represents a meaningful form of power. Any company selling to the EU’s roughly 450 million consumers must comply, regardless of where the system was built or trained. Compliance requirements get embedded into global product architectures because market segmentation is expensive: it is cheaper for a frontier lab to build one compliant system than to maintain separate versions. The standard travels with the product.

That mechanism is now under strain. In late 2025, the European Commission proposed pushing compliance deadlines for high-risk AI systems from August 2026 to as late as 2028, and removing AI literacy obligations from providers. Negotiations between Brussels and Washington on adjustments to the framework have been confirmed. Enforcement actions against major platforms are proceeding, and several large players have embedded EU-compliant transparency tools globally rather than segmenting their products. But the regulatory timeline is being renegotiated under political and competitive pressure before it has reached full force. The EU retains meaningful standards leverage. It is exercising it with less authority than its architects intended, against a much faster-moving industry than the framework was designed to govern. A delayed Brussels Effect is a diminished one.

The United States presents a different model, and one whose character has shifted materially in 2025–26. Historically, US standards influence flowed through passive diffusion: NIST frameworks, developer ecosystems, and the default architectures of dominant platforms became global norms not through mandate but through adoption.

That mechanism still operates. But the Trump administration has now layered an active, state-backed export programme on top of it. From April 2026, industry consortia can submit proposals to export full-stack AI packages (hardware, models, applications, and cybersecurity infrastructure) to allied and partner countries, with government financing and diplomatic support. The explicit aim is to embed US technology and governance models inside other countries’ digital infrastructure. This is infrastructure diplomacy, and it is the clearest operational expression yet of standards as a form of control. Its constraints are real: private sector autonomy means consortia are not obligated to participate, export controls create friction in some markets, and coordination across agencies is imperfect. But the direction is unambiguous.

China’s approach operates through a third mechanism: engagement in multilateral standards bodies such as ISO and the ITU, combined with state-financed infrastructure deployment across parts of Asia, Africa, and the Middle East. The strategy does not require China to win the frontier model competition. It requires establishing a large enough footprint of infrastructure built to its specifications that switching costs accumulate for the countries that adopt it. The constraint is a trust deficit in Western markets and an ecosystem that remains substantially isolated from the global developer community. China is building leverage with a subset of the world rather than influence over all of it.

Consensus on the Surface, Fracture Beneath

The New Delhi framework presents areas of apparent agreement: AI safety, the need for broader access, recognition of AI as critical infrastructure. But beneath this rhetorical alignment, the divergences that matter structurally are widening — and they are not all equally consequential.

The divergence that matters most is compute access. Who can train frontier models is determined almost entirely by who can access advanced chips and hyperscale infrastructure. Export controls, chip architecture dominance, and data centre geography create dependencies that no downstream policy choice can override.

A country with no path to frontier compute is structurally dependent on others’ AI, regardless of what governance principles it signs onto.

The divergence over open versus closed models matters, but less than it appears. Open model weights increase access to capable AI, but they do not transfer the ability to train the next generation of frontier systems. Distributing a trained model is not the same as distributing the capability to build one. Open weights are a downstream benefit; compute access is the upstream constraint.

Data sovereignty is real but enforcement-limited. The structural argument, that data generated by a country’s citizens should not train models that are then sold back to that country as products, is coherent. But data sovereignty only becomes a decisive lever when it can be enforced at scale and at the model layer, not just at the application layer. Most countries advocating for data sovereignty lack the regulatory infrastructure to enforce it against frontier labs that train on distributed datasets aggregated across jurisdictions.

The framework’s practical value is not as an enforcement mechanism. It is as a legitimation device — diplomatic cover for countries to pursue their interests while signalling membership in a shared project. The framework will not resolve the divergences that matter. It will become, over time, a venue where countries signal alignment while the structural decisions get made elsewhere.

Positioning Within the Stack — Through the Standards Lens

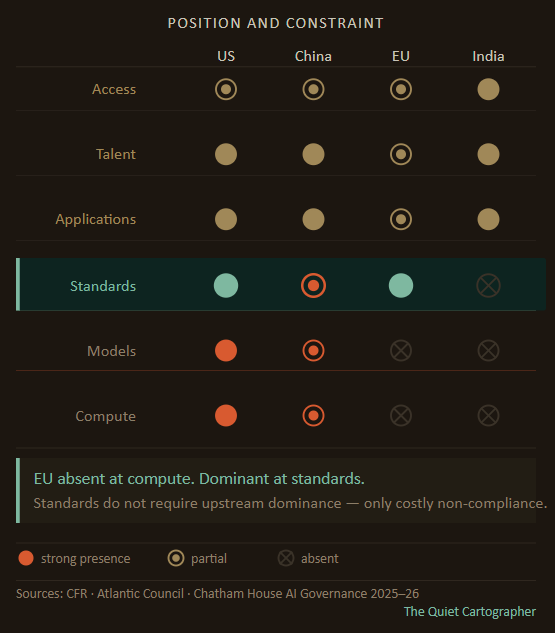

The conventional map of AI geopolitics assigns each major actor a dominant layer: US at compute and models, China building a parallel ecosystem, India contributing data and talent, EU exerting regulatory influence. This is accurate and insufficient. The more diagnostic question is what prevents each actor from expanding its position — because those constraints are what make the current configuration durable.

The United States dominates the upstream layers but is constrained by structural tensions in its own position. Private sector autonomy means the US government cannot simply direct frontier labs to serve national objectives — the relationship is cooperative at best, and the interests of capital-backed labs do not map cleanly onto state strategy. Political cycles create unpredictability in export policy and diplomatic commitments. The full-stack export programme is operationally ambitious but depends on private sector participation it cannot compel.

China has the capital, state coordination, and talent to contest the stack, and is doing so across every layer at once. Its constraint is more precise than simple ecosystem isolation, and the distinction matters. DeepSeek V4, released in preview in April 2026, was simultaneously validated on NVIDIA’s Blackwell GPU architecture and Huawei’s Ascend 950-series inference chips. DeepSeek gave Huawei weeks of early access to optimise for the model while pointedly denying the same courtesy to NVIDIA, yet still ensured full CUDA compatibility at launch. This is not the behaviour of a company building a sealed parallel ecosystem. It is the behaviour of a company calculating that the switching cost of abandoning CUDA, with its 75 million-plus downloads and decade-deep developer infrastructure, is too high to pay, even while it strategically migrates its own inference stack to domestic hardware.

China’s frontier labs understand the standards logic described above: CUDA is the de facto inference compatibility layer, and abandoning it forecloses access to the global developer community regardless of geopolitical intent. The real constraint is not isolation from the global stack. It is a trust deficit in Western and many non-aligned markets that limits how far Chinese infrastructure can travel, even when the models themselves remain deliberately interoperable.

The EU’s constraint is the most legible: high regulatory ambition, limited enforcement capacity, and minimal presence at the upstream layers that determine what it is actually regulating. It cannot set the pace of frontier development. It can only set the conditions under which frontier development is permitted to operate within its market, and even that authority is being compressed by external competitive pressure and by its own anxiety about falling behind.

India’s constraint is its position downstream in the stack. Strength at the data and talent layers translates into influence only if those assets can be converted into leverage at the model or standards layers, and neither conversion is straightforward without frontier compute access. India’s semiconductor push improves resilience at the compute layer, but it remains concentrated in segments that do not yet determine frontier capability. It reduces dependence without shifting the structure of the stack. India used the New Delhi summit to signal standards ambition, but it controls no layer that makes standards binding, and the framework does not create the mechanisms to make that positioning structural.

The System Taking Shape

The AI world order is not being negotiated in a single forum. It is being assembled — across layers, across actors, and across competing incentives that do not resolve into any clean equilibrium.

The framework provides a vocabulary. The stack provides the structure. Standards provide the enforcement.

The more useful prediction is not that the system fractures into two clean blocs — reality is messier than that — but that incompatibility across key layers will increase faster than coordination mechanisms can manage it. The US full-stack export programme is already operational. China’s bilateral infrastructure deployment is already accumulating switching costs in its target markets. The EU’s regulatory timeline is already being renegotiated. These are not future developments. They are present dynamics, visible now in investment flows, export licences, and bilateral infrastructure agreements that do not make front pages but are quietly determining which compatibility layer different parts of the world will be built on.

The divergence will not be uniform across the stack. Applications will remain globally distributed and substitutable. Access will remain uneven but not cleanly bloc-aligned. The incompatibility will concentrate at the layers where switching costs are highest: compute architecture, model infrastructure, and the standards that determine which systems can interact with which markets. Even here the picture resists clean binaries — Tesla, a US company, is deploying Chinese AI models in its vehicles for the Chinese market, optimising at the application layer for local compliance while its upstream compute dependencies remain elsewhere entirely. The blocs are forming at the infrastructure layer. At the application layer, the market is still doing what markets do.

Every country that signed the New Delhi framework will face a version of the same choice, on a shorter timeline than most of them appear to have planned for: which system will your developers build against, your regulators reference, and your infrastructure depend on? The framework itself will become the diplomatic record of a moment when that choice still appeared to be open.

The question is no longer who builds AI. It is who sets the cost of opting out — and that cost is being set right now, layer by layer, in decisions that look like infrastructure and act like geopolitics.

Follow on X: The Quiet Cartographer

References

Stanford HAI AI Index 2025

OECD AI Policy Observatory

EU AI Act and Digital Omnibus proposals

US NIST AI RMF

Trump administration AI Action Plan and American AI Exports Program

China New Generation AI Development Plan

India National AI Strategy

CSIS, CFR, Atlantic Council, Chatham House AI governance analysis, 2025–26

Goldman Sachs AI infrastructure estimates

TSMC and NVIDIA investor materials
