Default to Intensity

When measurement replaces meaning

This is Part 5 of a 5-part series examining how systems that cannot measure intent reshape the environments in which decisions are made.

According to The Washington Post (March 2026), the U.S. military struck over 1,000 targets in the first 24 hours of its attack on Iran, leveraging the most advanced AI it has ever deployed in warfare. Processes that previously required sequential stages — collection, analysis, verification, decision — were collapsed into a single continuous pipeline. Detection fed analysis. Analysis fed prioritisation. Prioritisation fed action. The intervals between stages, where interpretation once occurred, compressed toward zero.

This is among the most compressed decision environments observed at scale, but the dynamic it illustrates is not confined to military systems.

The pattern

Across this series, four systems produced different outcomes that pointed to the same underlying mechanism. Seen together, they are not separate cases, but variations of a single pattern.

Part 1: Default to Doom: Why AI Sees the Apocalypse: AI image models trained on large-scale datasets — including LAION-5B — generate outputs skewed toward dramatic, high-intensity content because those datasets over-represent such content (Birhane et al., 2021).

Part 2: Default to Heat: How Algorithms Reward Friction: Engagement-based ranking systems assign disproportionately higher value to high-friction interactions. The Facebook Files (Wall Street Journal, 2021) documented that engagement-based ranking amplified divisive content even when the platform’s own integrity researchers flagged the risk. The open-source release of X’s ranking algorithm confirms weighting asymmetries that structurally favour replies and extended interaction chains over passive agreement.

Part 3: Default to Aggregation: Self-learning AI systems encode the dominant patterns in their inputs. Research published in Nature (Shumailov et al., 2024) demonstrated that recursive training on model-generated data leads to narrowing output distributions, loss of variance, and erosion of the tails — the dissent, the nuance, the minority view.

Part 4: Default to Defence: Strategic systems prioritise measurable capability over unverifiable intent, producing accumulation dynamics documented across multiple contexts — from Cold War nuclear expansion, tracked by the Federation of American Scientists, to contemporary missile defence escalation analysed by the RAND Corporation and the Center for Strategic and International Studies.

Different domains. Different outputs. One mechanism.

Each system selects for signals that can be measured, compared, and optimised at scale. Each excludes what cannot be reliably encoded. And each amplifies, across every iteration, the signals that survived that selection.
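The select-then-amplify mechanism described above can be sketched as a toy simulation. This is a minimal illustration under stated assumptions, not a model of any real system: the function name `select_and_amplify`, the Gaussian starting scores, the 50% keep fraction, and the noise level are all hypothetical choices made for the example.

```python
import random
import statistics


def select_and_amplify(scores, keep_fraction=0.5, rounds=5, noise=0.05, seed=0):
    """Repeatedly keep the highest-scoring items, then refill the pool
    with noisy copies of the survivors.

    All parameters are illustrative assumptions, not measured values.
    Returns a list of (mean, spread) pairs, one per round, starting with
    the initial pool.
    """
    rng = random.Random(seed)
    pool = list(scores)
    history = [(statistics.mean(pool), statistics.pstdev(pool))]
    for _ in range(rounds):
        # Selection: only the most "measurable" (highest-scoring) items survive.
        pool.sort(reverse=True)
        survivors = pool[: max(1, int(len(pool) * keep_fraction))]
        # Amplification: the next pool is built entirely from survivors.
        pool = [rng.choice(survivors) + rng.gauss(0, noise) for _ in range(len(scores))]
        history.append((statistics.mean(pool), statistics.pstdev(pool)))
    return history


# Hypothetical starting pool: 2,000 items with scores centred on zero.
start_rng = random.Random(42)
initial = [start_rng.gauss(0.0, 1.0) for _ in range(2000)]

history = select_and_amplify(initial)
for i, (mean, spread) in enumerate(history):
    print(f"round {i}: mean score={mean:.2f}, spread={spread:.2f}")
```

In this sketch the mean score climbs while the spread shrinks from round to round: whatever was filtered out in an earlier round is simply absent from the pool that later rounds draw on.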

What the system cannot process

Visual intensity can be detected through contrast, composition, and labelled metadata. Engagement can be quantified through replies, shares, and dwell time. Capability can be measured through observable assets, deployments, and specifications.

Intent cannot. It cannot be directly observed across actors, standardised into a comparable metric, or incorporated into large-scale optimisation processes. It is structurally excluded — not by design at a single point, but by the requirement that inputs must be measurable to be processed at scale.

This exclusion does not remain isolated. It propagates across systems.

Content surfaced by platforms becomes training data for models. Model outputs shape what users produce. User content re-enters platform environments and is selected again by the same engagement criteria. Strategic systems draw on AI-processed inputs to inform operational decisions. Each stage operates on inputs that have already been filtered by the measurement constraints of the previous stage. The system does not correct for its own emphasis. It compounds it.
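The compounding described above can be made concrete with a small arithmetic sketch. It assumes, purely for illustration, that each stage in the loop weights high-intensity items twice as heavily as everything else and that the output of one stage becomes the full input of the next. The function names, the starting share of 20%, and the weight of 2 are hypothetical, not measured values from any platform or model.

```python
def surfaced_share(share, weight):
    """Share of high-intensity items after one stage that weights them
    `weight` times more heavily than other items."""
    boosted = weight * share
    return boosted / (boosted + (1.0 - share))


def run_loop(initial_share, weight, stages):
    """Feed each stage's output share into the next stage unchanged."""
    shares = [initial_share]
    for _ in range(stages):
        shares.append(surfaced_share(shares[-1], weight))
    return shares


# Hypothetical inputs: 20% high-intensity content, a 2x weighting per stage.
shares = run_loop(initial_share=0.2, weight=2.0, stages=4)
print([round(s, 3) for s in shares])
```

Under these assumptions the odds of high-intensity content double at every stage, so the share rises from 20% to roughly 80% after four stages. No stage applies more than a modest weighting; the shift comes from chaining them without any correction in between.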

The widening gap

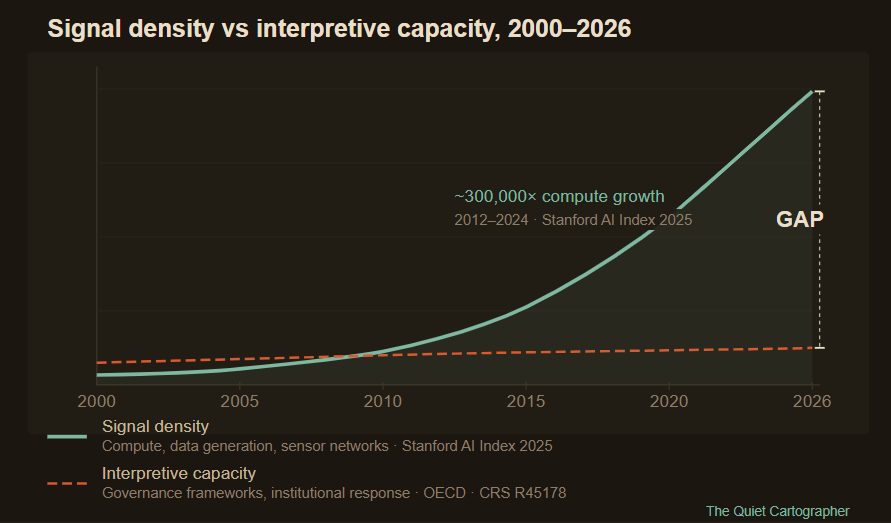

The volume of measurable signals is expanding faster than the capacity to interpret them.

This is not a temporary condition. Advances in sensing, data collection, and machine learning expand what can be captured and processed. Computational capacity increases the speed at which signals are surfaced. But interpretation — the process of assigning meaning to signals in context — depends on institutional processes, human judgment, and conceptual frameworks that develop far more slowly.

The result is a structural gap. The system becomes increasingly effective at generating signals while the ability to understand what they mean does not keep pace.

In information environments, this gap appears as over-representation of high-intensity content relative to the actual distribution of events and opinion. In model training, it appears as narrowing output distributions and reduced variance. In strategic systems, it appears as increasing visibility of capability alongside persistent uncertainty about intent — the condition that drives accumulation dynamics across military systems.

The mismatch, not the malice

The tension visible in the deployment of AI in active operations is often framed as a conflict between caution and urgency. That framing misses the structure.

Companies developing AI systems implement safeguards, usage restrictions, and staged deployment processes. Defence institutions integrate the same capabilities into operational environments where delay carries immediate cost. The friction between them — documented in reported disputes between commercial AI developers and the Pentagon over military use in active operations — is not a disagreement about values. It is a mismatch between the rates at which different parts of the system respond to the same structural condition.

One part attempts to slow integration to allow for interpretation and governance. Another operates under conditions where the system penalises delay. Neither is irrational. Both are locally rational responses to an environment that does not wait for alignment between them.

Capabilities that can be measured and deployed enter the system as soon as they are available. Interpretation, regulation, and shared understanding follow later — if they emerge at all.

What compression produces

As the system accelerates, the signals that persist are those that can be measured consistently across layers. The signals that attenuate are those that depend on context, interpretation, or intent.

They do not disappear. They become less visible and less influential.

The apparent range of opinion narrows when only high-engagement content propagates. The perceived level of conflict increases when friction-weighted systems surface disagreement. Strategic assessments skew toward worst-case interpretation when capability is visible and intent is not. Decision-makers across domains operate on inputs that are systematically filtered — not by any single actor’s choice, but by the structure of every system they rely on.

None of this requires failure. None of it requires malicious design. It follows from the interaction of measurement, optimisation, and scale — operating simultaneously, across every layer, with no mechanism to restore what the selection process removes.

The argument, completed

Systems amplify what they can measure. Across multiple domains, and across decades of documented system behaviour, this holds.

The distortions this produces are visible and increasingly well understood. Image models default to doom. Platforms default to heat. Self-learning systems default to aggregation. Strategic systems default to defence.

What is less visible — and what this series has been building toward — is the second-order effect.

When these systems operate simultaneously, each feeding the next, the aggregate environment changes. The inputs available for decision-making are not simply biased. They are systematically stripped of the signals that cannot survive measurement at scale: nuance, dissent, restraint, intent. What remains is intensity — not because anyone chose it, but because intensity is what measurement selects for, at every layer, without exception.

You cannot introduce intent into such a system as a stable variable without fundamentally changing what it can process. You cannot slow the expansion of measurable signals from within any single layer. And yet decisions across every domain — cultural, political, economic, military — increasingly depend on outputs generated within this environment.

The primary risk is not that systems default to intensity. It is that they do so faster than the processes required to interpret those signals can follow.

The question is no longer whether systems amplify what they measure.

It is how judgment operates in a system that cannot reliably recognise it.

Follow on X: The Quiet Cartographer
