Default to Heat: How Algorithms Reward Friction

Why calm loses, and what that does to public discourse

This is Part 2 of our five-part series exploring why AI, social media, and strategic systems tend to amplify extremes and shape what we perceive.

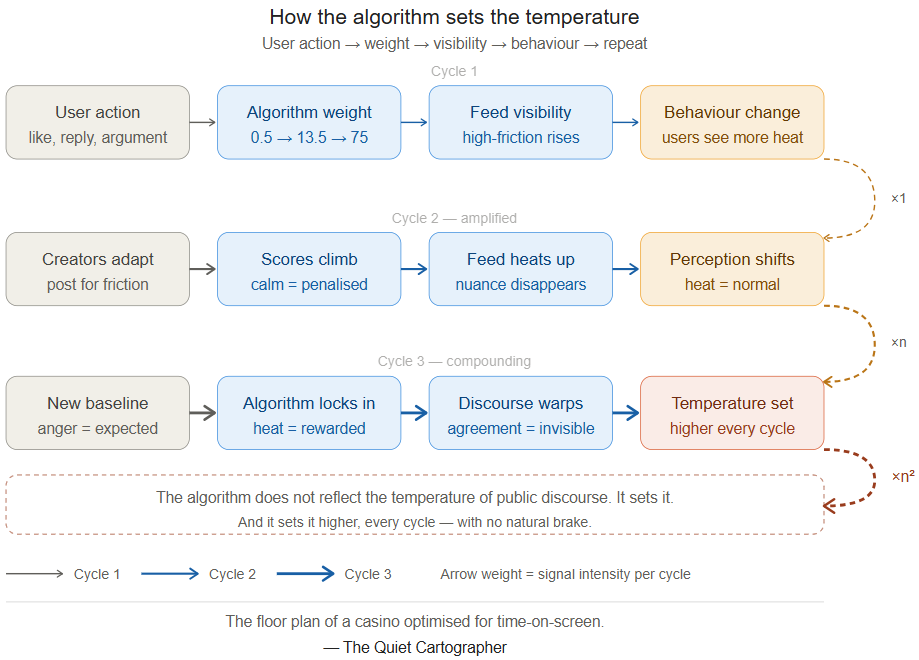

A like is worth 0.5 points. A reply chain where the author engages back is worth 75. A platform doesn’t calculate truth — it calculates heat.

What the numbers actually mean

The asymmetry is the story. A like is a signal of passive approval: you saw something, agreed with it, moved on. A reply is friction: you had a reaction strong enough to make you stop and type. The algorithm values that friction at 27 times the value of agreement, which puts a plain reply at 13.5 points against the like’s 0.5.

The 75-point reply chain is the sharpest number in the table. When the original poster engages back, and a back-and-forth develops, the algorithm assigns that exchange a score 150 times that of a like. Not because anyone designed a system to reward conflict specifically. Because the algorithm has no mechanism to read why two people are still talking. It reads only one signal: they are still on the platform.
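The two ratios quoted above can be checked with a few lines of arithmetic. This is a minimal sketch, not the platform's code: the like and author-engaged reply weights come from the text, the plain reply weight of 13.5 is inferred from the 27-times ratio, and the dictionary keys and the `ratio_to_like` helper are names invented for illustration.

```python
# Weights as quoted in the article. The plain-reply value is an inference:
# 27 times a 0.5-point like gives 13.5.
WEIGHTS = {
    "like": 0.5,
    "reply": 13.5,
    "reply_engaged_by_author": 75.0,
}


def ratio_to_like(signal: str) -> float:
    """Return how many likes one interaction of this type is worth."""
    return WEIGHTS[signal] / WEIGHTS["like"]


for signal in WEIGHTS:
    print(f"{signal}: worth {ratio_to_like(signal):g} likes")
```

The point of the exercise is that nothing in the calculation refers to what was said, only to which kind of interaction took place.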

This is the structural point. The algorithm is not biased toward anger. It is biased toward whatever keeps people typing. High-friction interactions (disagreement, argument, provocation) tend to sustain longer interaction chains than agreement. The system does not distinguish between types of engagement. It rewards duration. The outcome, amplified heat, is not a design intention. It is a structural consequence of optimising for a single variable: time-on-platform.

The casino floor

A casino does not need to decide who becomes addicted. It makes the most stimulating games the most visible, the most accessible, and the most rewarding, because maximum engagement is the operational objective. The house does not distinguish between a player who is having fun and one who is chasing losses. It measures one thing: time on the floor.

Social platforms are casino floors optimised for time-on-screen. The algorithm is the floor plan. Slots near the entrance. Bright lights on the tables with the highest action. The calm games tucked away where the serious players sit, rarely surfaced to the crowd.

The people who built these systems were not trying to amplify outrage. They were solving an engineering problem: maximise engagement. Outrage was the emergent solution. That distinction matters — because it tells you that replacing the executives changes nothing. The structure produces the outcome regardless of who is running it.

Upstream signal — Facebook Files (2021)

Internal research, later revealed through The Wall Street Journal’s Facebook Files reporting (2021), showed that Facebook’s engagement-driven ranking systems could amplify divisive and polarising content at scale. These findings were known within the company and presented to leadership. While some mitigations were explored, the platform did not fundamentally alter its engagement-optimised architecture, even when internal research suggested negative effects on public discourse. The broader lesson is not about Facebook alone. It is about what happens when an institution optimises aggressively for a single metric. Systems tend to produce the outcomes their metrics reward, regardless of stated values.

X’s transparency — publishing the algorithm weights openly — is genuinely unusual. Most platforms keep these numbers proprietary. The irony is that the transparency does not change the underlying dynamic. Knowing the weights does not make the casino floor less engineered. It just lets you see the floor plan.

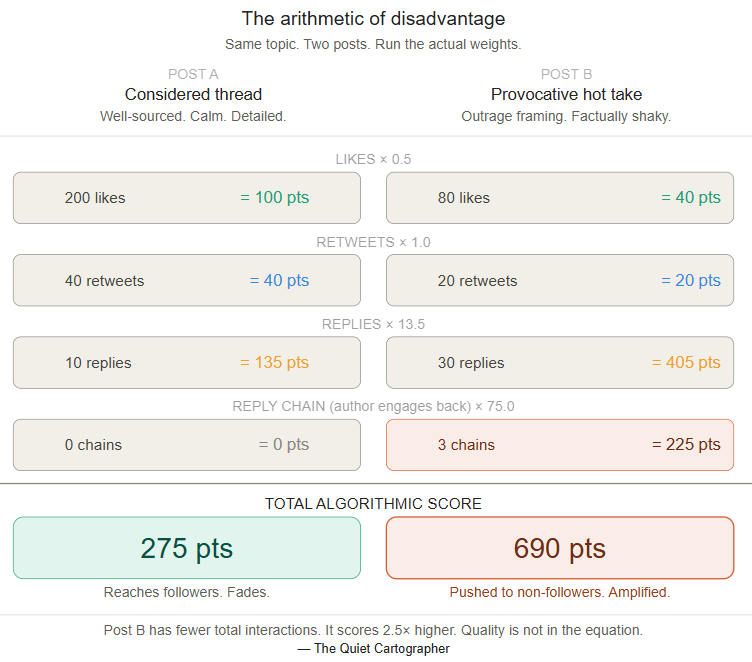

The arithmetic of disadvantage

This is the section most commentary skips. The algorithm’s bias toward heat does not just amplify disagreement — it structurally disadvantages anything that produces agreement, calm, or considered reflection. Not because of any explicit penalty. Because the scoring system simply does not value those qualities.

In the comparison above, Post B has fewer total interactions, yet it scores 2.5 times higher. The quality of the argument is not in the equation. Epistemic quality is not suppressed. It is simply not counted. This is not a bug in the system. It is the system doing exactly what its weights specify. The weights here are illustrative: they are based on publicly shared components of X’s ranking system, and the exact values evolve. The asymmetry, however, is consistent.
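The same arithmetic can be worked through as a short sketch. The interaction counts below are hypothetical, chosen only so that the result lands on the 2.5 ratio quoted in the text; they are not taken from the article's figure. The plain reply weight of 13.5 is inferred from the 27-times ratio, and `score` is an illustrative helper rather than any platform's function.

```python
# Illustrative weights: like and author-engaged reply as quoted in the
# article, plain reply inferred from the 27x ratio.
WEIGHTS = {"like": 0.5, "reply": 13.5, "reply_engaged_by_author": 75.0}


def score(interactions: dict[str, int]) -> float:
    """Weighted sum of interaction counts. Content quality never appears."""
    return sum(WEIGHTS[kind] * count for kind, count in interactions.items())


# Hypothetical posts (assumed numbers, for illustration only).
post_a = {"like": 1000}  # widely approved, no discussion
post_b = {"like": 190, "reply": 30, "reply_engaged_by_author": 10}  # contested

total_a, total_b = sum(post_a.values()), sum(post_b.values())
score_a, score_b = score(post_a), score(post_b)

print(f"Post A: {total_a} interactions, score {score_a:g}")
print(f"Post B: {total_b} interactions, score {score_b:g}")
print(f"Post B scores {score_b / score_a:g}x Post A")
```

Under these assumed counts, Post B has fewer than a quarter of Post A's interactions and still outranks it, because ten author-engaged reply chains alone outweigh a thousand likes.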

The information environment is not neutral. It is systematically tilted against epistemic quality — not by intention, but by arithmetic.

What the feed is, and isn’t

Your social media feed feels like a sample of what people are thinking and saying. It is not. It is the subset of human thought and expression that produces the highest friction, surfaced and re-surfaced by an algorithm that has been optimising for this outcome for as long as it has ranked the feed.

The calm voices exist. The considered arguments exist. The careful threads exist. They are systematically scored lower, surfaced less, and reach fewer people than the posts that generate heat. Over time, what surfaces shapes what feels like consensus, what feels like the range of acceptable opinion, and what feels like the intensity of disagreement in the world.

The algorithm does not reflect the temperature of public discourse. It sets it.

This is the same structural mechanism identified in Part 1 with AI image generation: a system optimised for one measurable signal (visual intensity there, engagement friction here) produces outputs that amplify that signal at the expense of everything the signal cannot measure. Calm. Nuance. Accuracy. Agreement.

The compounding problem

The loop does not stay still. As the algorithm surfaces more high-friction content, users are exposed to a feed calibrated toward heat. Their sense of what is normal, what is contested, and what is worth engaging with shifts accordingly. They produce new content in response to what they see. That content — shaped by an already-amplified environment — re-enters the system. The algorithm scores it. The cycle continues.
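The loop described above can be made concrete with a toy model. This is a sketch under strong assumptions, not a measurement of any real platform: attention is assumed to be split in proportion to weight times supply, users are assumed to shift a fixed fraction of what they post toward what they see, and every parameter (`start`, `adaptation`, the two weights) is a hypothetical value picked for illustration.

```python
def exposure_share(heated_supply: float, w_heated: float, w_calm: float) -> float:
    """Share of feed attention given to heated posts under weight-proportional ranking."""
    heated = heated_supply * w_heated
    calm = (1.0 - heated_supply) * w_calm
    return heated / (heated + calm)


def simulate(cycles: int, start: float = 0.2, adaptation: float = 0.1,
             w_heated: float = 13.5, w_calm: float = 0.5) -> list[float]:
    """Track the heated share of newly produced posts across ranking cycles."""
    shares = [start]
    for _ in range(cycles):
        seen = exposure_share(shares[-1], w_heated, w_calm)
        # Assumption: users move a fixed fraction of their output toward what the feed showed them.
        shares.append((1.0 - adaptation) * shares[-1] + adaptation * seen)
    return shares


for cycle, share in enumerate(simulate(5)):
    print(f"cycle {cycle}: heated share of new posts = {share:.2f}")
```

In this toy model the heated share rises every cycle whenever the heated weight exceeds the calm one, without any individual user in the model deciding to escalate. That is the structural, not volitional, drift the text describes.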

Each iteration, the baseline shifts. What felt provocative in 2010 is unremarkable in 2026. The floor of acceptable intensity has risen, not because people have become angrier, but because the system has continuously rewarded friction and continuously disadvantaged calm. The escalation is structural, not volitional.

The algorithm does not reflect the temperature of public discourse. It sets it. And it sets it higher, every cycle.

If you understand what the system rewards, you understand what you are seeing, and what you are not.

Next in the series

Part 3 — Default to Aggregation. Now imagine a self-learning AI watching all of this — every reply chain, every escalation, every sustained argument. It does not have morals. It does not have context. It learns patterns. And the patterns it sees, overwhelmingly, are heat. What happens when the system that amplifies human bias starts training on the output of another system that already amplified it? Next, we follow the logic one level deeper: into the AI that watches the feed, learns from it, and speaks back in the same language the feed rewarded.

Follow on X: The Quiet Cartographer