Default to Doom: Why AI Sees the Apocalypse

Why AI sees the apocalypse — and what that tells us about every system built on human attention

This is Article 1 of our 5-part series exploring why AI, social media, and strategic systems tend to amplify extremes and shape what we perceive.

Prompt an AI image model to “imagine the world by 2030.” Across most systems — DALL·E, Midjourney, Stable Diffusion — what emerges is not sunlight on glass or a morning commute. It is ruin. Cracked towers. Smoke-choked skies. Streets reclaimed by debris.

It is tempting to call this creativity, or even prophecy. The truth is more precise, and more revealing: the model is reflecting the statistical weight of what humans choose to photograph, caption, and share. The apocalypse is not a machine’s invention. It is a mirror of human overemphasis, run through an amplifier.

“The world AI paints isn’t foretelling the end — it’s reflecting what humans exaggerate, emphasize, and reward with attention.”

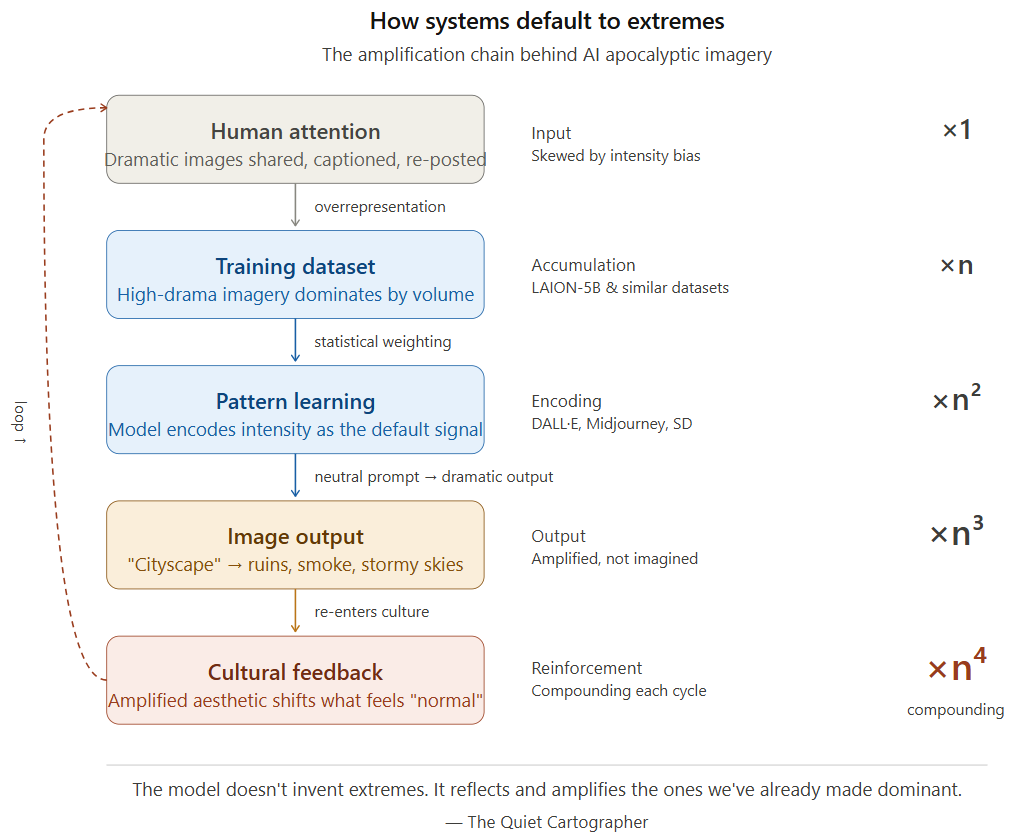

The core mechanism: pattern, not prediction

AI image models do not see, feel, or imagine. They learn statistical correlations between text and visual features from hundreds of millions to billions of image-caption pairs. What they produce is not meaning, but pattern. And in those patterns, intensity dominates.

High-drama, high-contrast visuals — storms, destruction, fire, ruin — appear disproportionately in the datasets these models train on. This is not an accident of data collection. It is a direct consequence of human attention economics: dramatic images are more likely to be created, shared, retained, and re-captioned. Over time, they accumulate statistical weight.

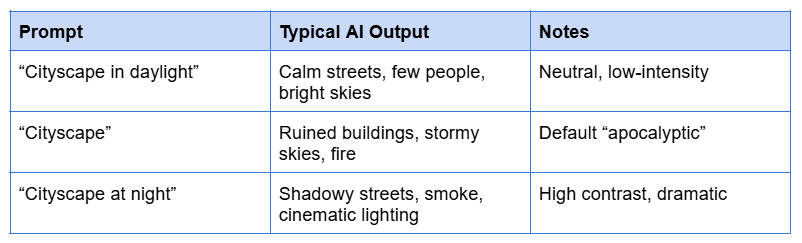

The result is consistent and structural. Even when prompts are vague or neutral, the model drifts toward high-signal patterns. A “cityscape” becomes shadowed, cinematic, or damaged — not because the model intends drama, but because the training data has taught it that these are the dominant visual patterns associated with the word.

The Empirical Anchor

The LAION-5B dataset, one of the largest publicly available training sets for image models, is built from images and their accompanying text scraped from public web pages: images that were shared, linked, and described online. Research into its composition has documented significant over-representation of emotionally charged, high-contrast imagery relative to the distribution of real-world scenes. What gets shared is not what exists; it is what provokes a response. Models trained on this data inherit that skew directly.

Think of it less like imagination and more like selection bias made visible. A DJ who only plays the loudest, most intense tracks is not predicting what the crowd wants — they are playing what the crowd has already rewarded most loudly. The quieter songs exist in the library. They are rarely chosen. Over time, the playlist drifts toward a particular kind of intensity, and that intensity starts to feel like the default.

This is what AI image generation looks like from the inside: not creative vision, but a statistical playlist assembled from what humans have most visibly attended to.
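The selection effect described in this section can be put into rough numbers. The sketch below is illustrative only: the function name and all three rates are hypothetical values chosen for the example, not measurements from LAION-5B or any other dataset. It shows how a small real-world share of dramatic scenes can become a large share of the pool a model trains on, simply because dramatic images are shared more often.

```python
def shared_fraction(base_rate: float, share_dramatic: float, share_calm: float) -> float:
    """Return the fraction of *shared* images that are dramatic.

    base_rate      -- fraction of real-world scenes that are dramatic
    share_dramatic -- probability that a dramatic image gets shared
    share_calm     -- probability that a calm image gets shared
    """
    dramatic = base_rate * share_dramatic
    calm = (1.0 - base_rate) * share_calm
    return dramatic / (dramatic + calm)


# Hypothetical numbers: 5% of real scenes are dramatic, but a dramatic image
# is ten times as likely to be shared as a calm one (20% vs 2%).
fraction = shared_fraction(base_rate=0.05, share_dramatic=0.20, share_calm=0.02)
print(f"{fraction:.1%} of the shared pool is dramatic")  # 34.5% of the shared pool is dramatic
```

Under these assumed rates, one scene in twenty is dramatic in the world, yet roughly one image in three is dramatic in the shared pool. Nothing in the collection step has to be biased for this to happen; the skew comes entirely from what people chose to share.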

Why humans feed the system

The prevalence of apocalyptic imagery in training data is not a quirk — it is a signal of a deeper human tendency. Dramatic, chaotic visuals travel further. They attract clicks, shares, and reposts in a way calm or ordinary scenes rarely do. This creates a skewed visual ecosystem where intensity is structurally over-represented.

The tendency runs deeper than platforms. From myth and epic to modern cinema, societies have long been drawn to catastrophe and collapse. Apocalyptic narratives persist because they are emotionally charged — they evoke fear, awe, and tension, which are precisely the responses easiest to remember and hardest to ignore. What gets attention gets replicated. What gets replicated gets learned.

When AI models train on this environment, they do not inherit a balanced picture of human visual culture. They inherit its emphases. They learn not the world as it is, but the world as it is most vividly expressed and most widely shared. This becomes clearer when we look at how models respond to even simple prompts:

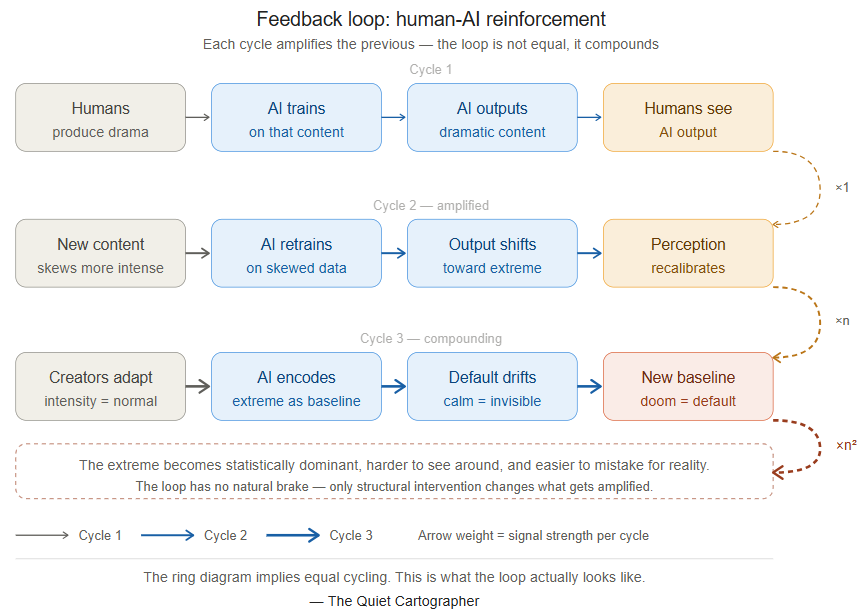

Feedback loops

There is a cycle at work, and it is self-reinforcing. Humans produce dramatic content because it captures attention. That content flows into training datasets. Models trained on those datasets produce similarly dramatic outputs. Those outputs re-enter human visual culture, subtly shifting what “normal” looks and feels like. Creators respond, consciously or not, by producing new content that aligns with the amplified aesthetic. The data shifts again.

“We train the system. The system trains us back. And each cycle, the extremes become a little more statistically dominant.”

This is not a designed outcome. No one decided that AI should default to doom. It emerged from the interaction of human attention patterns, platform incentives, data collection practices, and model training objectives — each individually defensible, collectively producing a system that consistently elevates extremes.
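The cycle in this section can be sketched as a toy simulation. Everything here is an assumption made for illustration: the function name, the idea that a model over-weights dramatic patterns by a fixed odds multiplier (`amplification`), and the idea that a fixed fraction of each new training pool (`synthetic_mix`) is model output. It is not a description of how any real model or dataset behaves, only a way to see how small per-cycle effects compound.

```python
def run_feedback_loop(initial_share: float, amplification: float,
                      synthetic_mix: float, cycles: int) -> list[float]:
    """Track the dramatic share of a training pool across train/generate cycles.

    initial_share -- dramatic fraction of the starting pool (must be below 1.0)
    amplification -- assumed factor by which the model multiplies the odds of drama
    synthetic_mix -- assumed fraction of each new pool that is model output
    """
    share = initial_share
    shares = [share]
    for _ in range(cycles):
        # The model's output over-weights dramatic patterns relative to its data.
        odds = amplification * share / (1.0 - share)
        output_share = odds / (1.0 + odds)
        # Part of the next pool is model output; the rest carries over unchanged.
        share = (1.0 - synthetic_mix) * share + synthetic_mix * output_share
        shares.append(share)
    return shares


# Hypothetical settings: a pool that starts 35% dramatic, a model that
# multiplies the odds of drama by 1.5, and 20% synthetic content per cycle.
for cycle, share in enumerate(run_feedback_loop(0.35, 1.5, 0.2, cycles=5)):
    print(f"cycle {cycle}: {share:.1%} dramatic")
```

With any amplification above 1, the dramatic share rises every cycle even though no single step looks extreme. Setting `amplification` to 1.0 leaves the share flat, which is the sense in which each component can be individually defensible while the loop as a whole drifts.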

The same pattern, other domains

This dynamic is not unique to AI image generation. It appears wherever attention, incentives, and amplification intersect.

On social media, outrage travels faster than agreement because engagement algorithms reward intensity over accuracy. The post that provokes the strongest reaction — especially disagreement — surfaces to more feeds, regardless of its epistemic quality. Calm, considered content exists; it is structurally disadvantaged.

In financial markets, extreme price movements attract disproportionate attention, draw in new participants, and often trigger further volatility. What begins as a fluctuation becomes a trend, reinforced by the very reactions it generates.

News cycles follow the same logic. Sensational stories dominate not because they are most representative, but because they are most compelling. Over time, the exceptional comes to feel commonplace — because the exceptional is what the system has learned to amplify.

AI image generation is not an outlier. It is one more node in a network of systems that share the same structural property: intensity is more visible than normalcy, and visibility determines what gets learned, replicated, and reinforced.

Consequences: When the amplified becomes the baseline

The downstream effects of this pattern are not abstract. Repeated exposure to AI-generated extremes gradually reshapes what feels visually normal. When apocalyptic imagery appears consistently across tools, platforms, and products, it stops feeling like an anomaly and begins to function as a default aesthetic — one that users, designers, and creators increasingly calibrate to.

For institutions that rely on AI-generated imagery (humanitarian organizations visualizing crisis, news outlets illustrating climate coverage, governments communicating risk), this creates a specific problem: the visual language of their communications is being shaped by a tool with a systematic bias toward intensity. The result may not be inaccurate, but it is not representative. It is the world filtered through the statistical weight of what humans have chosen to emphasize, and then amplified.

This is the key distinction The Quiet Cartographer (TQC) is built on. The AI is not inventing extremes. It is exposing and amplifying the ones already embedded in the data, making visible the emphases that were always there but are now structural, scalable, and difficult to see around.

“AI doesn’t show us the world as it is. It shows us the patterns we’ve made impossible to ignore — at scale, and with the appearance of objectivity.”

Next in the series

Part 2 — Default to Heat: Social Media’s Algorithmic Fury. A like is worth 0.5 points. A reply is worth 13.5. A heated back-and-forth earns 75. These are not hypothetical weights — they are the documented scoring system inside one of the world’s largest platforms. Next, we follow the same structural logic from AI image generation into the feed: why disagreement is mathematically more valuable than agreement, and what happens to a society whose information environment is optimised for heat, not signal.
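To make those weights concrete, here is a minimal worked example. It uses only the three figures quoted above; the dictionary keys, the function name, and the assumption that a post's score is a plain weighted sum of interaction counts are ours, added for illustration. A real ranking system may combine these signals differently.

```python
# Weights as quoted in the text; the key names are hypothetical labels.
WEIGHTS = {"like": 0.5, "reply": 13.5, "heated_exchange": 75.0}


def engagement_score(counts: dict[str, int]) -> float:
    """Weighted sum of interaction counts (an assumed, simplified scoring rule)."""
    return sum(WEIGHTS[kind] * n for kind, n in counts.items())


calm_post = {"like": 100}                                     # widely liked, no argument
heated_post = {"like": 10, "reply": 2, "heated_exchange": 1}  # few likes, one argument

print(engagement_score(calm_post))    # 50.0
print(engagement_score(heated_post))  # 107.0
```

Under this simplified rule, a post with a hundred likes scores less than half as much as a post with ten likes and a single heated exchange.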

Follow us on X: The Quiet Cartographer

Years ago, I read Eckhart Tolle’s A New Earth, a commentary on the malevolent shadow that ego casts on human existence. Reading your piece, I was reminded of a strain of that argument: ego loves drama because it provides a stronger sense of self. Drama is a textbook symptom of the collective human ego. We can apply this to our current AI discourse and join the dots to see how the Apocalyptic Default becomes an inevitable psychological byproduct.

If Tolle were to analyse this, I suspect he would argue that the ego defines itself through opposition: by framing AI as a ‘predator’ or an ‘existential threat’, it finds a grand, cinematic foil against which to define its own importance. A peaceful, utility-driven AI is boring to the ego because it offers no friction. The apocalypse, however, provides the ultimate drama!

Or have I gone down the wrong rabbit hole?